Mobility

Intel | Trust Interactions

Leading the way into an autonomous future, Intel partnered with Teague to combine human-centered insights with prototyping expertise for a driverless rideshare solution.

CHALLENGE

Driverless vehicles headline the future of transportation, but mass deployment still faces challenges. Working at the front line of the autonomous future, Intel wanted to pivot from a narrowly tech-centric view to an inclusive, human-centered design approach. The global tech leader called on Teague to help prototype possible autonomous rideshare solutions through an iterative testing process. In a program that would inform the engineering and development of Intel’s autonomous driving platform, the team designed and tested a digital interface for driverless-rideshare passengers.

APPROACH

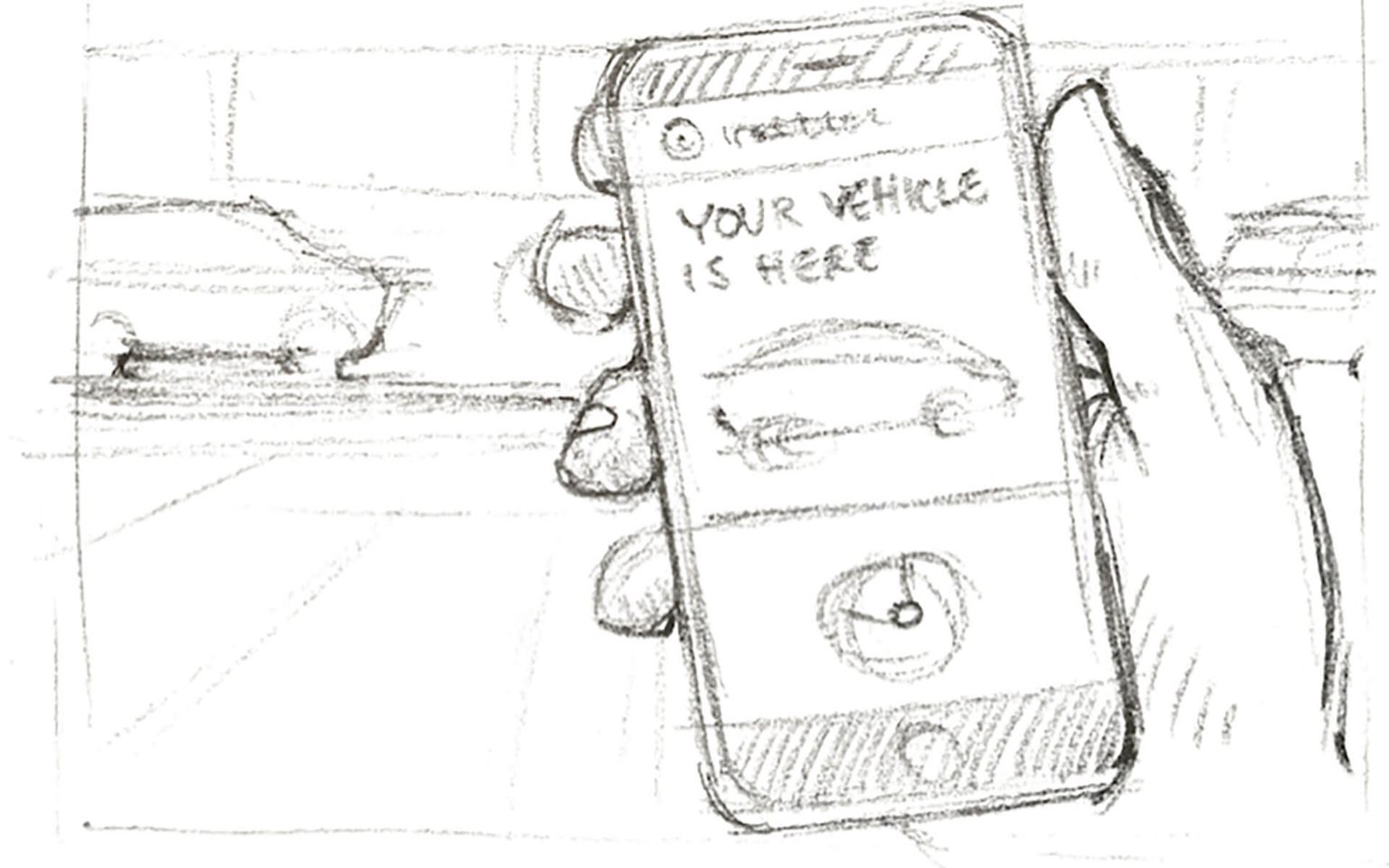

Teague kicked off the program with workshops that drew on prior research to prioritize the user stories we would design against. Our futurists and designers explored the ways in which people would realistically interact with autonomous vehicles in the near future, covering the full scope of the user experience, from requesting and approaching a driverless rideshare car, to changing routes, to what the vehicle communicates during a ride, and even to dealing with an accident.

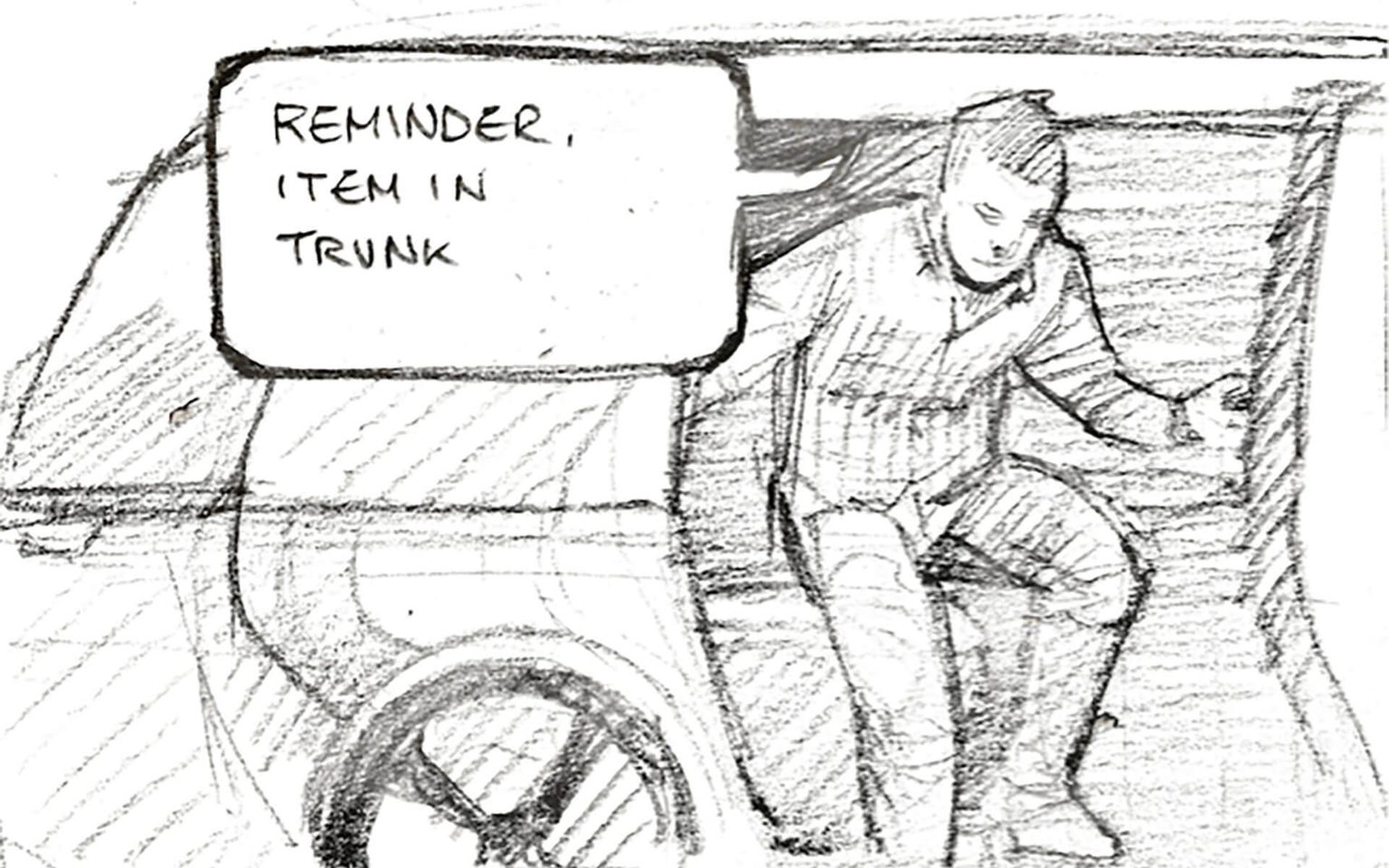

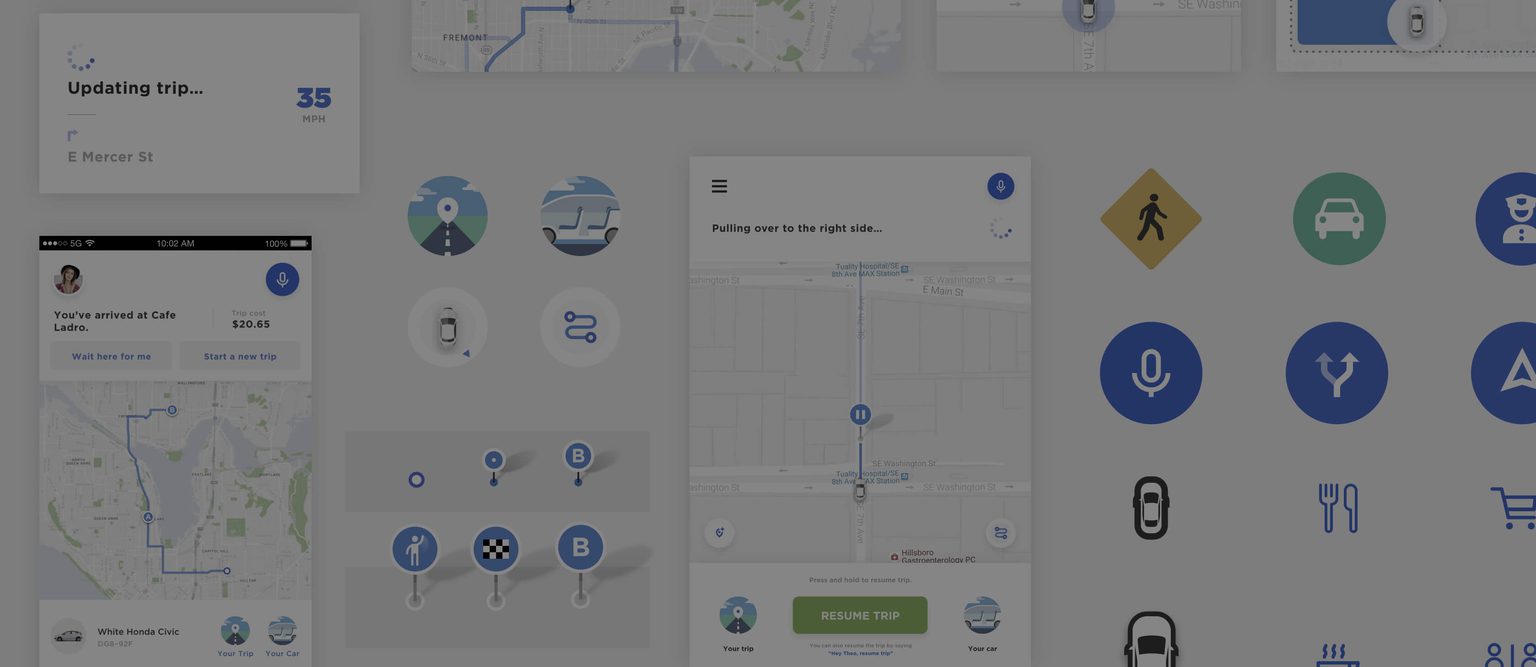

Our iterative design process progressed in cycles of prototyping and testing, going from sketches and wireframes to higher-fidelity designs and immersive UI prototypes within each cycle. We began with basic prototypes on stationary bucks made of 80/20 extrusions, with video emulating rideshare scenarios. At each stage of refinement, findings and insights were shared with Intel engineering and development stakeholders to identify the sensing and computing requirements for their autonomous drive platform.

SOLUTION

Teague and Intel’s 18-month program culminated in a full-scale prototype vehicle complete with internal and external digital interaction experiences, running on the road in Chandler, AZ. The team’s interface prototypes gave life to Intel’s autonomous vehicle technology plans, allowing stakeholders and users to experience and learn from potential futures. Our solution employed visual, sound, and voice cues that let users interact with the system on their terms. We had to invent digital alternatives for the simple interactions we take for granted when there’s a human driver onboard (questions like “which airline are you flying?” or “would you like to be dropped off in front or at the corner?” had to be rethought when the interaction was completely digital).

With code designed for redeployment, the team ported the technology from our stationary prototype into autonomous cars at Intel’s Arizona campus, where we tested on real roads. We tested how well our designs built trust with users. These findings were formalized for Intel in a deliverable we call Trust Interactions, which pinpoints and prioritizes the information and assurances people really need in order to trust AV technology.

Teague is an excellent partner for exploring new experiences that people will have with technology. The teams I’ve collaborated with are smart, curious, flexible, and eager to roll up their sleeves and dig into the tough interaction and design problems that invariably arise.

Matt Yurdana

User Experience Director, IoT Experiences | Intel

RESULT

The digital autonomous vehicle program was just the latest chapter of Teague’s twenty-year relationship with Intel. Our team worked closely with the company on building out its vision for a driverless future, improving the capabilities of its autonomous vehicle platform and taking it closer to reality. Our technologists, futurists, and designers have participated in multiple speaker engagements alongside Intel’s leadership, and Teague has supported the tech giant’s presentations on the progress and details of its autonomous driving innovations at the Consumer Electronics Show (CES).