The Boeing Company

A new era of sustainable aerospace.

Lighting the path to a carbon-free future for commercial aviation.

Innovation starts with shared aspirations for a new and better world. Your ambitions demand a special partner, and that's why we exist. We're an independent design consultancy collaborating with clients like you to solve the complexities and impossible tensions at the heart of any project capable of changing the world.

Conversations with our experts

From designing future space stations to understanding sex in zero gravity, Teague Creative Director Jacqui Belleau talks about space as the next frontier for design and innovation.

Our clients in their own words

The quality of work Teague produces, combined with the perfect blend of grounded research and visionary design, and the professional packaging and delivery of the resulting work is everything we need in a design partner.

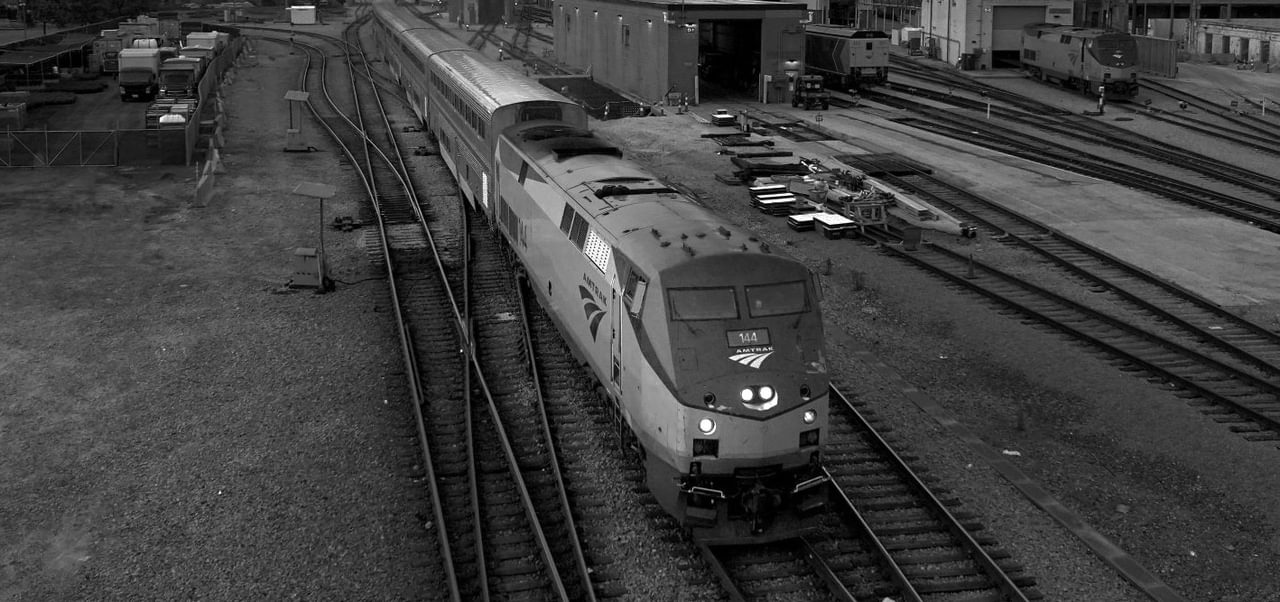

Anthony D. Paul

Director of Strategic Foresight | Amtrak